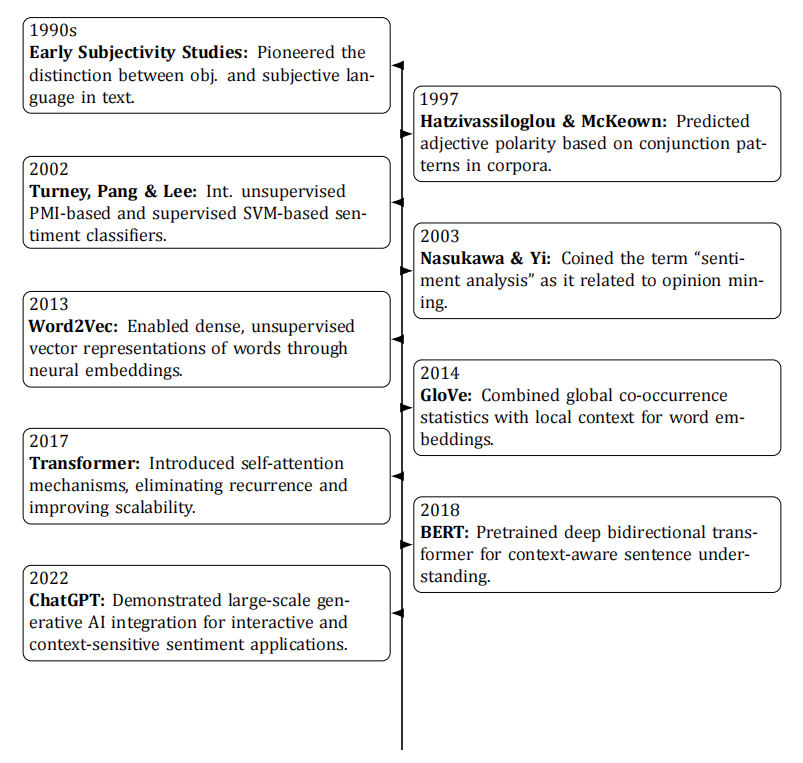

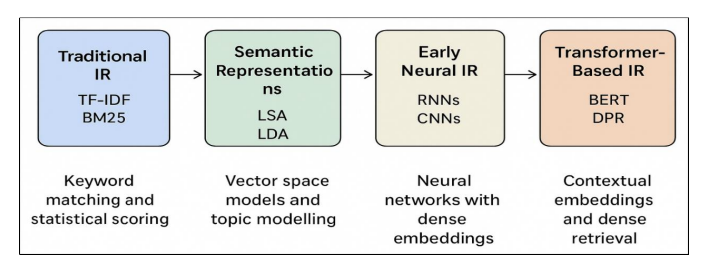

Understanding public opinion at scale is both a scientific challenge and a practical necessity in the digital era: the proliferation of online communication platforms has created unprecedented opportunities to monitor attitudes in near real time. Early work in subjectivity detection and semantic orientation laid the methodological foundations for automated sentiment extraction, focusing on distinguishing objective from subjective content and determining polarity. Contemporary applications face far more complex requirements. They demand systems that can process massive, noisy, and dynamic data streams while integrating multimodal signals from text, images, audio, and video. This paper presents a historical review of sentiment analysis and opinion monitoring through the lens of artificial intelligence, tracing developments from the early 1990s to the present. It classifies approaches into lexicon‑based heuristics, classical machine learning, deep neural architectures, transfer learning, and multimodal fusion, with an emphasis on both technical and conceptual advances. Extensive tables summarize algorithms, datasets, and case studies across domains including politics, finance, and entertainment, highlighting practical lessons and performance trends. The review also addresses pressing ethical concerns, including bias, fairness, and transparency, and considers the implications of rapidly evolving AI capabilities. We conclude by outlining future directions that emphasize adaptability, context awareness, and the integration of emerging technologies into scalable and reliable opinion analysis systems.
